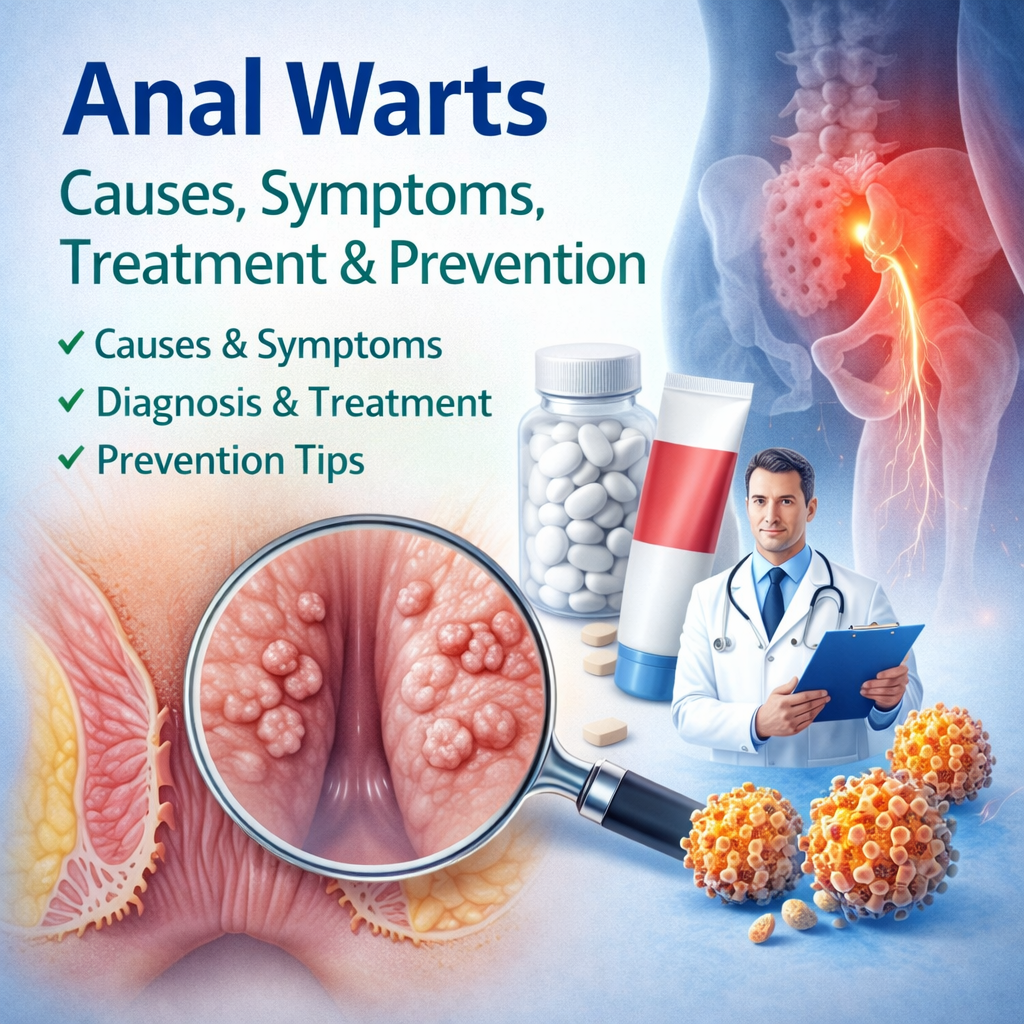

Health headlines are built to feel clear. A food “cuts risk”, a habit “adds years”, a product “raises the chance” of disease. The language is neat, memorable and often far more certain than the evidence behind it.

That is why one of the most useful skills in health reading is knowing the difference between correlation and causation. Once that distinction clicks, many dramatic claims lose their force. You can read with more confidence, less anxiety, and a sharper sense of what a study actually showed.

Two ideas that are close, but not the same

Correlation means two things appear together. If people who drink more coffee also seem to have lower rates of one illness, that is a correlation. It tells us there is a relationship worth noticing.

Causation is a stronger claim. It means a change in one thing produces a change in another. If coffee truly reduces disease risk, then coffee is not just travelling alongside the outcome. It is part of the reason the outcome changes.

In health research, that jump from “seen together” to “caused by” is where trouble starts. People are complicated. Habits cluster. Income, stress, work patterns, sleep, smoking, exercise, access to care, existing illness and medication use can all move in the background at the same time.

A person with regular sleep may also have a stable schedule, fewer chronic conditions and more predictable meals. A person who chooses diet drinks may already be trying to manage weight. A pregnant person taking pain relief may be taking it because of fever or inflammation, which may matter too. The supposed cause may not be the cause at all.

Correlation can still matter. It can point researchers towards real problems, useful clues and stronger future studies. It just cannot, on its own, settle the argument.

Why headlines often blur the line

Health reporting has to compress a lot of information into very little space. Study design, limitations and uncertainty do not always fit neatly into a short headline. Cautious phrases also tend to be less clickable than bold claims. “Linked to” sounds restrained. “Causes” sounds decisive.

That is why wording matters so much. A headline may turn a cautious paper into something far more direct than the researchers intended. Press releases can do it. Social posts can do it. So can the natural human urge to simplify a messy finding into one tidy message.

Often a single word, “associated”, is the most honest part of a health story.

The table below is a useful shortcut when you are scanning a headline or summary.

| Headline wording | What it usually signals | What a careful reader should think |
|---|---|---|
| “Causes”, “prevents”, “cuts risk”, “leads to” | A causal claim | Ask whether this came from a randomised trial or a very strong body of evidence |
| “Linked to”, “associated with”, “related to” | A correlation | Other factors may explain the pattern |
| “May help”, “could lower risk”, “might raise risk” | Early or cautious evidence | Interesting, but not settled |
| “People who did X were more likely to…” | A group comparison | Check how the groups differed before assuming cause |

A headline is not a verdict. It is an invitation to ask better questions.

What can create a false sense of cause

The biggest trap is confounding. That means a third factor helps explain both sides of the relationship. Wealth can affect diet and health outcomes. Stress can affect sleep and heart risk. Education can affect medical access and disease rates. If those factors are not fully accounted for, a study can look more dramatic than it really is.
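For readers who like to see the mechanism, confounding can be reproduced in a toy simulation. The sketch below is illustrative only: the population, the "habit" and every percentage in it are invented assumptions, not figures from any study. Wealth drives both the habit and the illness, while the habit itself does nothing.

```python
import random

def simulate(n=100_000, seed=0):
    """Build a made-up population where wealth affects both a habit and illness."""
    rng = random.Random(seed)
    people = []
    for _ in range(n):
        wealthy = rng.random() < 0.5
        # Assumption: wealthier people are more likely to have the habit (70% vs 30%).
        habit = rng.random() < (0.7 if wealthy else 0.3)
        # Assumption: illness depends only on wealth (10% vs 20%), never on the habit.
        ill = rng.random() < (0.10 if wealthy else 0.20)
        people.append((wealthy, habit, ill))
    return people

def illness_rate(rows):
    """Share of people in rows who are ill."""
    return sum(ill for _, _, ill in rows) / len(rows)

def risk_gap(people):
    """Illness rate among people with the habit minus the rate among those without."""
    with_habit = [p for p in people if p[1]]
    without_habit = [p for p in people if not p[1]]
    return illness_rate(with_habit) - illness_rate(without_habit)

people = simulate()
crude_gap = risk_gap(people)                                  # everyone pooled together
gap_wealthy = risk_gap([p for p in people if p[0]])           # wealthy people only
gap_not_wealthy = risk_gap([p for p in people if not p[0]])   # everyone else

print(f"Pooled gap:          {crude_gap:+.3f}")
print(f"Gap, wealthy only:   {gap_wealthy:+.3f}")
print(f"Gap, others only:    {gap_not_wealthy:+.3f}")
```

In the pooled comparison the habit looks protective. Once people are compared only with others of similar wealth, the gap shrinks towards zero, because the habit never had any effect in this invented world.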

Reverse causation is another common problem. Sometimes the outcome starts influencing the exposure rather than the other way round. People gaining weight may choose diet drinks. People feeling low may spend more time online. People becoming unwell may change how they eat, sleep or exercise. The sequence matters.

Chance matters too. Small studies can produce striking results that fail to hold up later. Weak measurements can muddy the picture. Self-reported food intake is a classic example. People forget, underestimate, overestimate and answer in ways that sound healthier than real life.
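The point about small studies can also be checked with a quick simulation. This is a sketch under invented assumptions: two groups with exactly the same true illness rate (30%), a "striking" result defined as a gap of at least 20 percentage points, and hypothetical group sizes of 20 and 1,000.

```python
import random

def striking_share(group_size, studies=500, gap_points=20, true_rate=0.3, seed=2):
    """Share of simulated studies showing a big gap between two identical groups."""
    rng = random.Random(seed)
    striking = 0
    for _ in range(studies):
        # Both groups share the same true rate, so any gap is pure chance.
        ill_a = sum(rng.random() < true_rate for _ in range(group_size))
        ill_b = sum(rng.random() < true_rate for _ in range(group_size))
        # Integer comparison: is the gap at least gap_points percentage points?
        if abs(ill_a - ill_b) * 100 >= gap_points * group_size:
            striking += 1
    return striking / studies

small = striking_share(group_size=20)
large = striking_share(group_size=1000)

print(f"Small studies with a striking gap: {small:.1%}")
print(f"Large studies with a striking gap: {large:.1%}")
```

With only 20 people per group, a noticeable fraction of studies produce a headline-sized gap even though nothing real is going on. With 1,000 per group, such gaps all but disappear.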

When a claim sounds too neat, these are the usual suspects:

- Confounding variables

- Reverse causation

- Selection bias

- Small sample size

- Self-reported habits

- Random chance

A strong health claim should survive those problems, not depend on readers ignoring them.

The examples that keep returning

Some stories keep resurfacing because they are easy to sell. Nutrition is full of them. One week a food looks protective, the next week it looks harmful, then a third study seems to reverse both. Much of that churn comes from observational research being reported with more certainty than it deserves.

Take a few familiar patterns in health news.

- Diet drinks and weight gain: People already concerned about weight are more likely to choose low-calorie drinks, so the drink may be travelling with the problem rather than creating it.

- Regular sleep and longer life: People with consistent sleep often differ in work schedule, chronic illness, income, alcohol use and stress, all of which can influence long-term health.

- Pain relief in pregnancy and later child outcomes: The reason a medicine was taken may matter as much as the medicine itself, which makes simple cause-and-effect claims risky.

- Social media and depression: Low mood may increase time online just as heavy use might worsen wellbeing, and both may sit alongside loneliness, sleep loss or family stress.

Even amusing examples teach the same lesson. The well-known “chocolate and Nobel Prize” link was memorable precisely because it was absurd. Countries eating more chocolate also had more Nobel winners. That did not mean chocolate created genius. It showed how easy it is to confuse a pattern with an explanation.

This matters because health headlines shape behaviour. Weak evidence can create false hope, needless fear or guilt about ordinary choices. A dramatic story may spread faster than a careful correction, especially when the claim fits what people already believe.

Study design tells you more than the headline ever will

Observational studies are useful. They help spot patterns in large groups and can raise important early warnings. Cohort studies, case-control studies and cross-sectional studies all have a place in medicine. Many public health insights start there.

Still, observational research usually cannot prove cause by itself. The groups being compared were not assigned at random, so hidden differences remain a problem even after careful statistical adjustment.

Randomised controlled trials are stronger when a real experiment is possible and ethical. Random assignment helps balance many background differences between groups. If one group receives a treatment and another does not, and the groups were otherwise similar at the start, a causal reading becomes much more reasonable.
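A small simulation can show why random assignment helps. The sketch below assumes a hidden health condition affecting 30% of people, and compares two ways of forming groups: a coin flip, and self-selection in which people with the condition tend to avoid the treatment. All numbers are made up for illustration.

```python
import random

def condition_gap(randomised, n=20_000, seed=1):
    """How much more common a hidden condition is in the treated group than the untreated one."""
    rng = random.Random(seed)
    treated, untreated = [], []
    for _ in range(n):
        has_condition = rng.random() < 0.3  # assumed prevalence of the hidden condition
        if randomised:
            # A coin flip that ignores everything about the person.
            takes_treatment = rng.random() < 0.5
        else:
            # Assumption: people with the condition choose the treatment less often (20% vs 60%).
            takes_treatment = rng.random() < (0.2 if has_condition else 0.6)
        (treated if takes_treatment else untreated).append(has_condition)
    return sum(treated) / len(treated) - sum(untreated) / len(untreated)

self_selected_gap = condition_gap(randomised=False)
randomised_gap = condition_gap(randomised=True)

print(f"Gap in hidden condition, self-selected groups: {self_selected_gap:+.3f}")
print(f"Gap in hidden condition, randomised groups:    {randomised_gap:+.3f}")
```

When people choose for themselves, the two groups differ sharply before any treatment begins. Under random assignment the hidden condition ends up spread almost evenly, even though nobody measured it.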

Study design matters more than headline drama.

Even then, one trial is rarely enough. The most convincing health claims come from a wider picture: repeated findings, different populations, sensible timing, a plausible biological explanation, and results that make sense when viewed together.

What careful readers listen for

Good health journalism does not need to sound timid. It needs to sound precise. “Associated with” is not weak language. It is accurate language. “May help lower risk” is often more trustworthy than a sweeping promise.

When you read a striking claim, pause for a moment and look for the bones of the story. Was it an observational study or a trial? How large was it? Were researchers tracking people over time or taking one snapshot? Did the report mention limitations? Was the result large in absolute terms or only impressive as a percentage?

This is where many readers gain an immediate edge. A story that says risk “doubled” may still describe a very small change in actual numbers. A story that mentions only one new paper, with no context from earlier research, should not carry the same weight as a mature body of evidence.
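The arithmetic behind a "doubled" risk is easy to work through. The baseline figures below (1 in 10,000 and 1 in 10) are hypothetical, chosen only to show how the same relative change can mean very different things in absolute terms.

```python
from fractions import Fraction

def absolute_change(baseline_risk, relative_risk):
    """Return (new_risk, extra_risk) after multiplying a baseline risk by a relative risk."""
    new_risk = baseline_risk * relative_risk
    return new_risk, new_risk - baseline_risk

# Hypothetical baselines: a rare outcome and a common one.
rare_new, rare_extra = absolute_change(Fraction(1, 10_000), 2)
common_new, common_extra = absolute_change(Fraction(1, 10), 2)

for label, extra in [("Rare outcome", rare_extra), ("Common outcome", common_extra)]:
    # Express the absolute change as extra cases per 10,000 people.
    print(f"{label}: risk doubled, {extra * 10_000} extra cases per 10,000 people")
```

Both headlines could truthfully say risk "doubled", yet one describes a single extra case per 10,000 people and the other a thousand.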

If the story skips limitations, that is information too.

A practical reading habit that pays off

A few questions can slow the rush from headline to belief. What exactly was compared? Could another factor explain the pattern? Did the article say who funded the work? Has the same result appeared in several strong studies, or is this a lone finding with a dramatic spin?

A healthy scepticism is not cynicism. It is a calm, useful habit. It helps readers keep uncertainty in proportion and protects them from being pulled around by every fresh claim.

Careful reading also makes health news more valuable. You stop looking for miracle foods, instant dangers and single-cause stories. You start noticing the quality of evidence, the honesty of the wording and the difference between a clue and a conclusion. That is a far stronger basis for real decisions about health.